AI Risk Management Framework

- Monday - 12/08/2024 05:59

Source: https://www.nist.gov/itl/ai-risk-management-framework

On April 29, 2024, the National Institute of Standards and Technology (NIST) released a draft publication based on the AI Risk Management Framework (AI RMF) to help manage the risks of generative AI. The draft AI RMF Generative AI Profile can help organizations identify the unique risks posed by generative AI, and it proposes risk management actions that best align with their goals and priorities. Developed over the past year with input from NIST's generative AI public working group of more than 2,500 members, the guidance centers on a list of 12 risks and more than 400 actions that developers can take to manage them. More information is available here.

On April 30, 2024, NIST posted a crosswalk between the NIST AI Risk Management Framework (AI RMF) and Japan's AI Guidelines for Business (AI GfB).

In collaboration with the public and private sectors, NIST has developed a framework to better manage the risks that artificial intelligence (AI) poses to individuals, organizations, and society. The NIST AI Risk Management Framework (AI RMF) is intended for voluntary use and aims to improve the ability to incorporate trustworthiness considerations into the design, development, use, and evaluation of AI products, services, and systems.

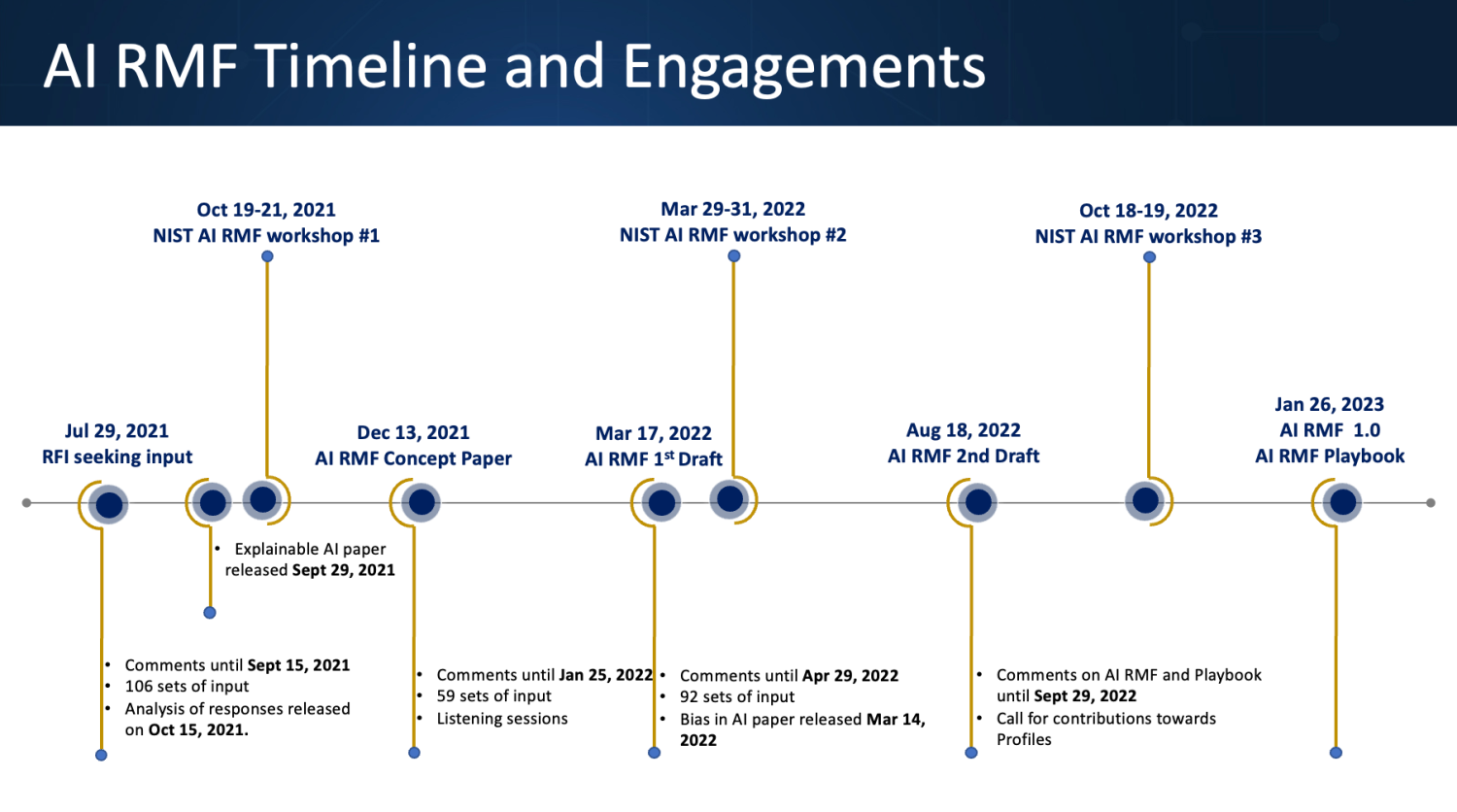

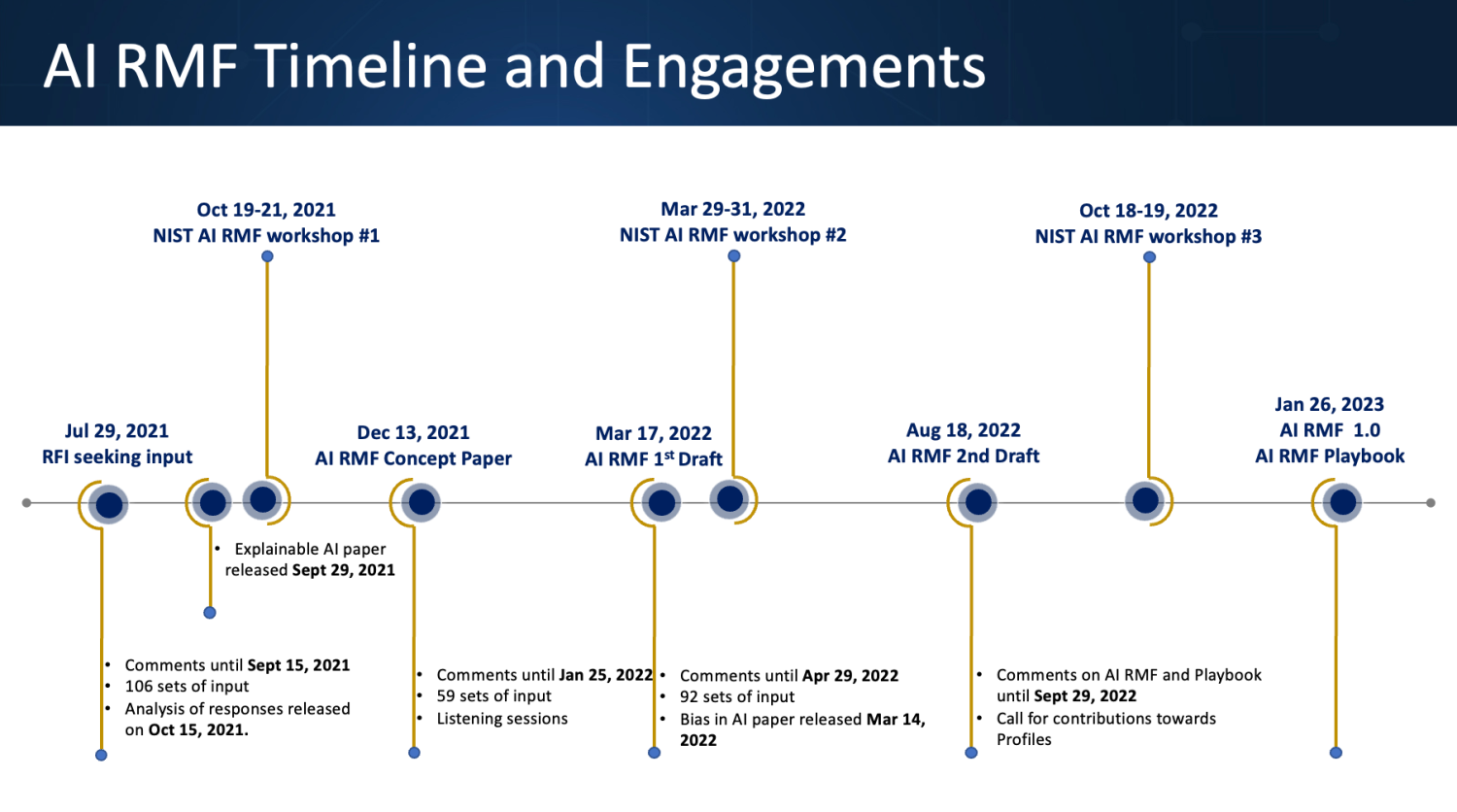

Released on January 26, 2023, the Framework was developed through a consensus-driven, open, transparent, and collaborative process that included a Request for Information, several draft versions for public comment, multiple workshops, and other opportunities to provide input. It is intended to build on, align with, and support AI risk management efforts by others.

NIST has also published a companion AI RMF Playbook, along with an AI RMF Roadmap, an AI RMF Crosswalk, and various Perspectives. In addition, NIST is making available a video explainer about the AI RMF.

On March 30, 2023, NIST announced the launch of the Trustworthy and Responsible AI Resource Center, which will facilitate implementation of, and international alignment with, the AI RMF.

To view the comments received on previous drafts of the AI RMF and on the Requests for Information, see the AI RMF Development page.

Prior Documents

- Second draft of the AI Risk Management Framework (August 18, 2022)

- Initial draft of the AI Risk Management Framework (March 17, 2022)

- Concept paper to help guide development of the AI Risk Management Framework (December 13, 2021)

- Brief summary of responses to the July 29, 2021 RFI (October 15, 2021)

- Draft - Taxonomy of AI Risk (October 15, 2021)

- AI Risk Management Framework Request for Information (July 29, 2021)

On April 29, 2024, NIST released a draft publication based on the AI Risk Management Framework (AI RMF) to help manage the risk of Generative AI. The draft AI RMF Generative AI Profile can help organizations identify unique risks posed by generative AI and proposes actions for generative AI risk management that best align with their goals and priorities. Developed over the past year and drawing on input from the NIST generative AI public working group of more than 2,500 members, the guidance centers on a list of 12 risks and more than 400 actions that developers can take to manage them. More information is available here.

On April 30, 2024, NIST posted a crosswalk between the NIST AI Risk Management Framework (AI RMF) and the Japan AI Guidelines for Business (AI GfB).

In collaboration with the private and public sectors, NIST has developed a framework to better manage risks to individuals, organizations, and society associated with artificial intelligence (AI). The NIST AI Risk Management Framework (AI RMF) is intended for voluntary use and to improve the ability to incorporate trustworthiness considerations into the design, development, use, and evaluation of AI products, services, and systems.

Released on January 26, 2023, the Framework was developed through a consensus-driven, open, transparent, and collaborative process that included a Request for Information, several draft versions for public comments, multiple workshops, and other opportunities to provide input. It is intended to build on, align with, and support AI risk management efforts by others.

A companion NIST AI RMF Playbook has also been published by NIST, along with an AI RMF Roadmap, AI RMF Crosswalk, and various Perspectives. In addition, NIST is making available a video explainer about the AI RMF.

On March 30, 2023, NIST launched the Trustworthy and Responsible AI Resource Center, which will facilitate implementation of, and international alignment with, the AI RMF.

To view public comments received on the previous drafts of the AI RMF and Requests for Information, see the AI RMF Development page.

Prior Documents

Translated by: Lê Trung Nghĩa

letrungnghia.foss@gmail.com